Treefit - Getting started¶

This document is a user-friendly manual on how to use the Treefit package, which is the first toolkit for quantitative trajectory inference using single-cell gene expression data. In this tutorial, we demonstrate how to generate and analyze two kinds of toy data with the aim to help get familiar with the practical workflow of Treefit.

1. Generating toy data¶

While the Treefit package has been developed to help biologists who wish to perform trajectory inference from single-cell gene expression data, Treefit can also be viewed as a toolkit to generate and analyze a point cloud in d-dimensional Euclidean space (i.e., simulated gene expression data).

Treefit provides some useful functions to generate artificial

datasets. For example, as we will now demonstrate, the function

treefit.data.generate_2d_n_arms_star_data() creates data

that approximately fit a star tree with the number of arms or

branches; the term star means a tree that looks like the star symbol

“*”; for example, the alphabet letters Y and X can be viewed as star

trees that have three and four arms, respectively.

The rows and columns of the generated data correspond to data points (i.e., n single cells) and their features (i.e., expression values of d different genes), respectively. The Treefit package can be used to analyze both raw count data and normalized expression data, but regarding the production of toy data, it is meant to be used to generate continuous data like normalized expression values.

Importantly, we can generate data with a desired level of noise by

changing the value of the fatness parameter of this function. For

example, if you set the fatness parameter to 0.0 then you will

get precisely tree-like data without noise. By contrast, setting the

fatness to 1.0 gives very noisy data that are no longer

tree-like. In this tutorial, we deal with two types of toy data whose

fatness values are 0.1 and 0.8, respectively. We note that

Treefit can be used to generate and analyze high dimensional datasets

but we focus on generating 2-dimensional data to make things as simple

as possible in this introductory tutorial.

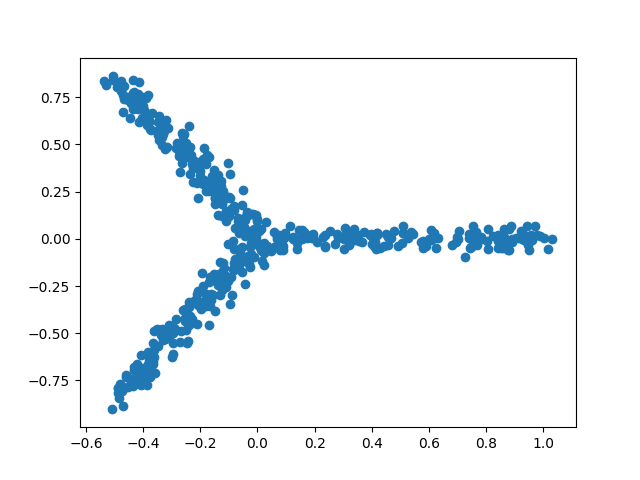

1.1. Tree-like toy data¶

Let us first generate 2-dimensional tree-like data that contain 500 data points and reasonably fit a star tree with three arms. We can create such data and draw a scatter plot of them (Figure 1) simply by executing the following two lines of code:

In [1]: import matplotlib.pyplot as plt

In [2]: import treefit

In [3]: star_tree_like = treefit.data.generate_2d_n_arms_star_data(500, 3, 0.1)

In [4]: plt.figure();

In [5]: plt.scatter(star_tree_like[:, 0],

...: star_tree_like[:, 1]);

...:

Figure 1. A scatter plot of tree-like toy data generated by Treefit

1.2. Less tree-like, noisy toy data¶

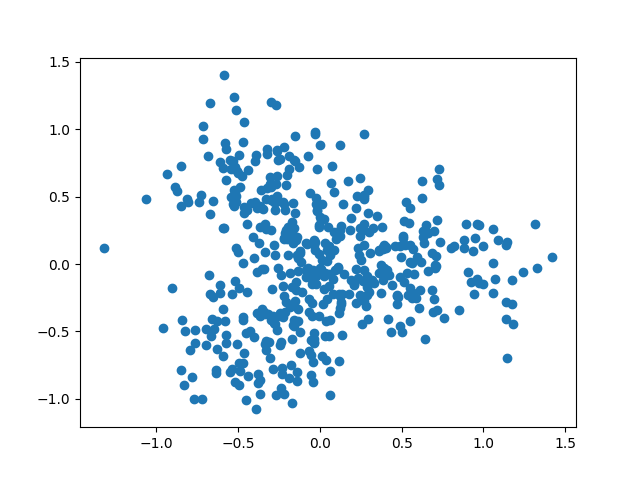

Similarly, we can generate noisy data that do not look so tree-like as

the previous one. In this tutorial, we simply change the value of the

fatness parameter from 0.1 to 0.8 in order to obtain

non-tree-like data. The following two lines of code yields a scatter

plot shown in Figure 2:

In [6]: star_less_tree_like = treefit.data.generate_2d_n_arms_star_data(500, 3, 0.8)

In [7]: plt.figure();

In [8]: plt.scatter(star_less_tree_like[:, 0],

...: star_less_tree_like[:, 1]);

...:

Figure 2. A scatter plot of noisy toy data generated by Treefit

2. Analyzing the data¶

Having generated two datasets whose tree-likeness are different, we can analyze each of them. The Treefit package allows us to estimate how well the data can be explained by tree models and to predict how many “principal paths” there are in the best-fit tree. As shown in Figure 1, the points in the first data clearly form a shape like the letter “Y” and so the data are considered to fit a star tree with three arms very well, whereas Figure 2 indicates that the second data are no longer very tree-like because of the high level of added noise. Our goal in this tutorial is to reproduce these conclusions by using Treefit.

2.1. Tree-like toy data¶

Let us estimate the goodness-of-fit between tree models and the first

toy data. This can be done by using treefit.treefit() as

follows. The name parameter is optional but we should specify it,

if possible. Because it’s useful to identify the estimation.

In [9]: fit_tree_like = treefit.treefit({'expression': star_tree_like},

...: name='tree-like')

...:

In [10]: print(fit_tree_like)

treefit.fit.Fit: tree-like

max_cca_distance:

p mean standard_deviation

0 1 0.525533 0.256161

1 2 0.056173 0.012468

2 3 0.048170 0.006462

3 4 0.046294 0.006131

4 5 0.043460 0.005851

5 6 0.042430 0.005762

6 7 0.040809 0.005367

7 8 0.038870 0.005620

8 9 0.037796 0.005563

9 10 0.036458 0.005144

10 11 0.035436 0.004998

11 12 0.034550 0.004864

12 13 0.033489 0.004939

13 14 0.033105 0.004799

14 15 0.032704 0.004782

15 16 0.031995 0.004899

16 17 0.031337 0.004575

17 18 0.030109 0.005185

18 19 0.029680 0.005192

19 20 0.029223 0.004962

rms_cca_distance:

p mean standard_deviation

0 1 0.525533 0.256161

1 2 0.069504 0.012882

2 3 0.088734 0.009029

3 4 0.252361 0.081862

4 5 0.126856 0.013084

5 6 0.141515 0.012029

6 7 0.221383 0.055702

7 8 0.180711 0.022460

8 9 0.210041 0.024030

9 10 0.230481 0.026864

10 11 0.244004 0.040005

11 12 0.283121 0.027968

12 13 0.296309 0.027656

13 14 0.330686 0.031417

14 15 0.334938 0.030241

15 16 0.359512 0.021286

16 17 0.357224 0.029239

17 18 0.390234 0.019264

18 19 0.402841 0.019841

19 20 0.404040 0.016546

n_principal_paths_candidates:

[3, 6, 9, 18]

treefit.treefit() returns a treefit.fit.Fit

object that summarizes the analysis of Treefit. We will explain how to

interpret the results in the next section. For now, we may focus on

learning how to use Treefit.

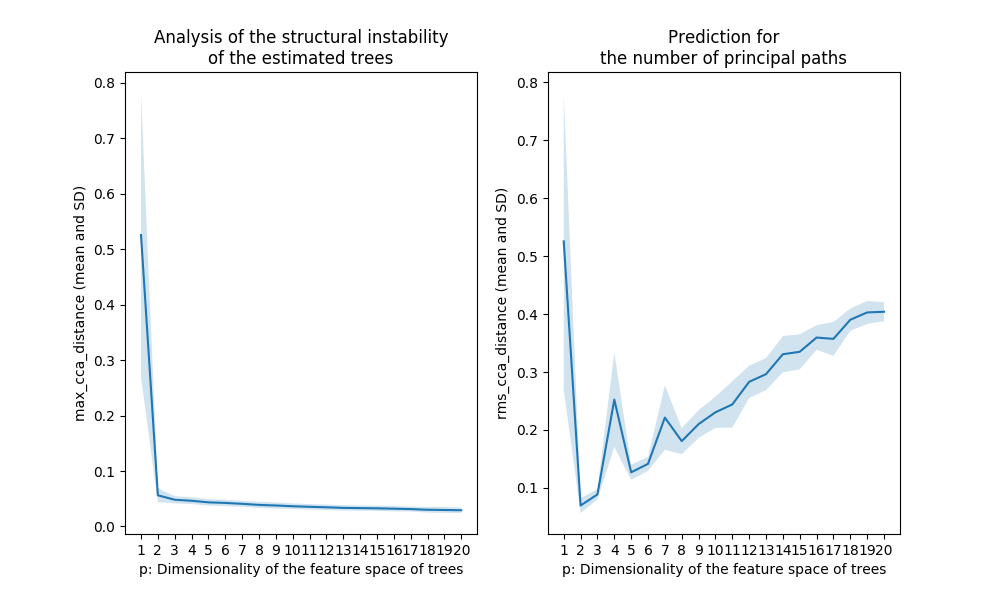

As we will see later, it is helpful to visualize the results using

treefit.plot(). By executing treefit.plot(fit_tree_like),

we can obtain the following two user-friendly visual plots, which

makes it easier to interpret the results of the Treefit analysis.

In [11]: treefit.plot(fit_tree_like)

Figure 3. The output for the tree-like data shown in Figure 1

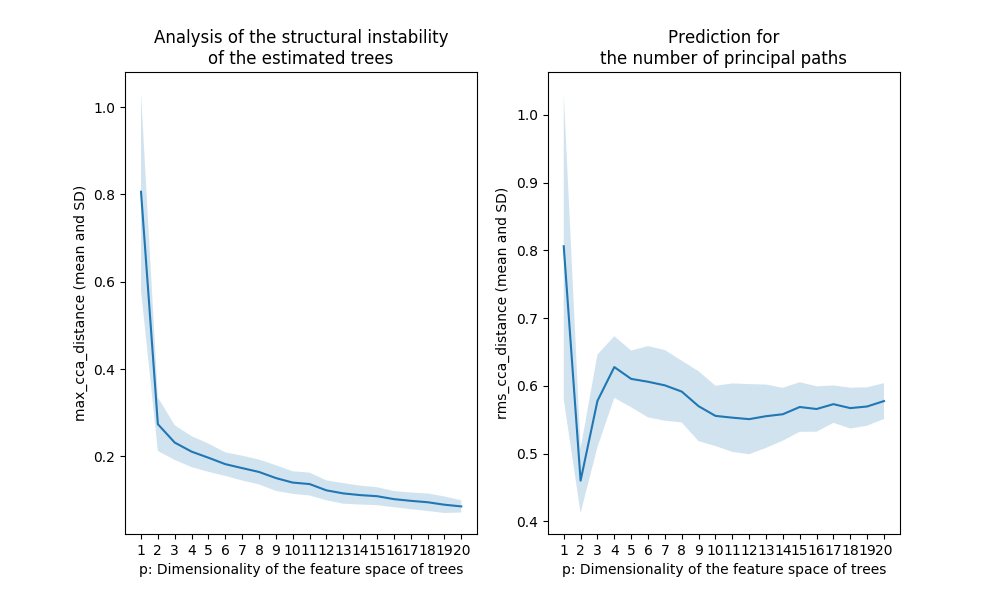

2.2. Less tree-like, noisy toy data¶

We can analyze the second toy data in the same manner.

In [12]: fit_less_tree_like = \

....: treefit.treefit({'expression': star_less_tree_like},

....: name='less-tree-like')

....:

In [13]: print(fit_less_tree_like)

treefit.fit.Fit: less-tree-like

max_cca_distance:

p mean standard_deviation

0 1 0.805926 0.226182

1 2 0.273711 0.061454

2 3 0.231613 0.039771

3 4 0.210874 0.035432

4 5 0.196933 0.032405

5 6 0.182219 0.026901

6 7 0.173168 0.028497

7 8 0.164329 0.028447

8 9 0.150368 0.029421

9 10 0.139997 0.025840

10 11 0.136604 0.026209

11 12 0.122230 0.022835

12 13 0.115212 0.023572

13 14 0.111331 0.021646

14 15 0.108842 0.020588

15 16 0.102107 0.018567

16 17 0.098205 0.019104

17 18 0.095000 0.020071

18 19 0.089316 0.018986

19 20 0.085445 0.014010

rms_cca_distance:

p mean standard_deviation

0 1 0.805926 0.226182

1 2 0.460137 0.047803

2 3 0.577808 0.068314

3 4 0.627684 0.045517

4 5 0.610238 0.041886

5 6 0.606020 0.052572

6 7 0.600699 0.052121

7 8 0.591505 0.045530

8 9 0.569942 0.051620

9 10 0.555651 0.044463

10 11 0.553027 0.050516

11 12 0.550825 0.051761

12 13 0.555187 0.046762

13 14 0.558086 0.038971

14 15 0.568755 0.036608

15 16 0.565789 0.033530

16 17 0.572950 0.027621

17 18 0.567119 0.030153

18 19 0.569511 0.028331

19 20 0.577514 0.026444

n_principal_paths_candidates:

[3, 13, 17, 19]

In [14]: treefit.plot(fit_less_tree_like)

Figure 4. The output for the noisy data shown in Figure 2

2.3. Comparing two results¶

We can compare different results by passing all results to

treefit.plot() as follows:

In [15]: treefit.plot(fit_tree_like, fit_less_tree_like)

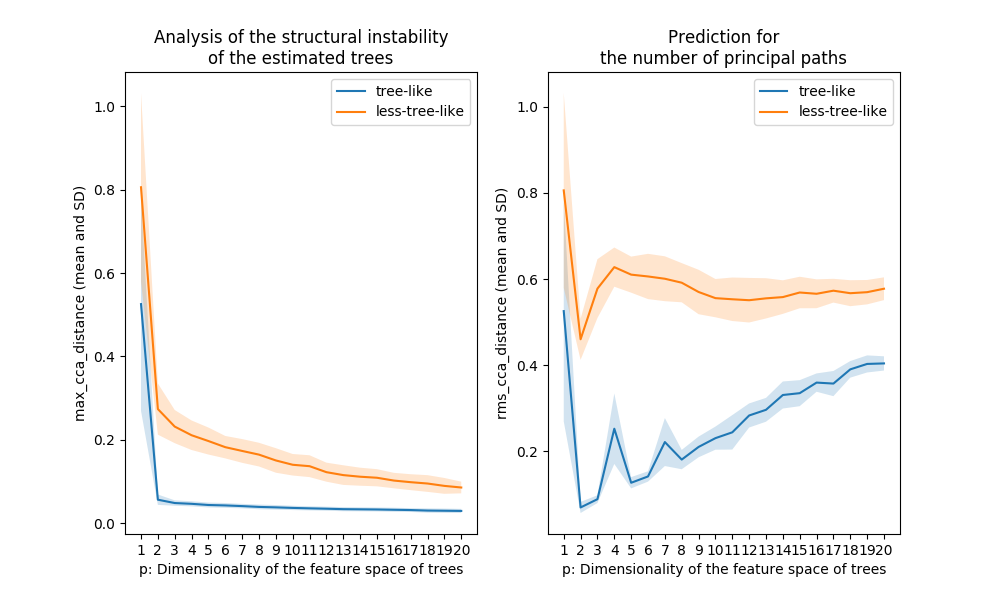

Figure 5. Comparison of the plots shown in Figure 3 and Figure 4

3. Interpreting the results¶

Before interpreting the previous results, we briefly summarize the process of the Treefit analysis that consists of the following three steps.

First, Treefit repeatedly “perturbs” the input data (i.e., adds some small noise to the original row count data or normalized expression data) in order to produce many slightly different datasets that may have been acquired in the biological experiment.

Second, for each dataset, Treefit calculates a distance matrix that represents the dissimilarities between sample cells and then constructs a tree from each distance matrix. The current version of Treefit computes a minimum spanning tree (MST) that has been widely used for trajectory inference.

Finally, Treefit evaluates the goodness-of-fit between the data and tree models. The underlying idea of this method is that the structure of trees inferred from tree-like data tends to have high robustness to noise, compared to non-tree-like data. Therefore, Treefit measures the mutual similarity between estimated trees in order to check the stability of the tree structures. To this end, Treefit constructs a <i>p</i>-dimensional subspace that extracts the main features of each tree structure and then measuring mutual similarities between the subspaces by using a special type of metrics called the Grassmann distance. In principle, when the estimated trees are mutually similar in their structure, the mean and standard deviation (SD) of the Grassmann distance are small.

Although the word “Grassmann distance” may sound so unfamiliar to some

readers, the concept appears in different disguises in various

practical contexts. For example, the Grassmann distance has a close

connection to canonical correlation analysis (CCA). Treefit provides

two Grassmann distances treefit.fit.Fit.max_cca_distance and

treefit.fit.Fit.rms_cca_distance that can be used for different

purposes as we now explain.

3.1. Estimating the tree-likeness of data¶

The Treefit analysis using the first Grassmann distance

treefit.fit.Fit.max_cca_distance (shown in the left panel of

Figure 5) tells us the goodness-of-fit between data and tree

models. In principle, as mentioned earlier, if the mean and SD of

treefit.fit.Fit.max_cca_distance are small, then this means that

the estimated trees are mutually similar in their structure. As can be

observed, the distance changes according to the dimensionality p of

the feature space, but treefit.fit.Fit.max_cca_distance has the

property that the value decreases monotonically as p increases

for any datasets.

Comparing the Treefit results for the two datasets, we see that the mean Grassmann distance for the first data does not fall below the second one regardless of the value of p and that the SD of the Grassmann distance for the first data is very small compared to the second data. These results imply that the estimated tree structures are very robust to noise in the first case but not in the second case. Thus, Treefit has verified that the first data are highly tree-like while the second data are not.

3.2. Predicting the number of principal paths in the underlying tree¶

The Treefit analysis using the other Grassmann distance

treefit.fit.Fit.rms_cca_distance (shown in the right panel of

Figure 5) is useful to infer the number of “principal paths” in the

best-fit tree. From a biological perspective, this analysis can be

used to discover a novel or unexpected cell type from single-cell gene

expression.

Unlike the previous Grassmann distance, the mean value of

treefit.fit.Fit.rms_cca_distance can fluctuate depending on the

value of p. Interestingly, we can predict the number of principal

paths in the best-fit tree by exploring for which p the distance

value reaches “the bottom of a valley” (i.e., attains a local

minimum). More precisely, when treefit.fit.Fit.rms_cca_distance

attains a local minimum at a certain p, the value p +1 indicates

the number of principal paths in the best-fit

tree. treefit.fit.Fit.n_principal_paths_candidates has these p +1

values. We don’t need to calculate them manually. When Treefit

produces a plot having more than one valleys, the smallest p is

usually most informative for the prediction. The smallest p +1 value

can be obtained by treefit.fit.Fit.n_principal_paths_candidates[0].

Comparing the Treefit results for the two datasets, we first see that both plots attains a local minimum at p =2. This means that for both datasets the best-fit tree has p +1=3 principle paths, which is correct because both were generated from the same star tree with three arms. Another important point to be made is that the SD of the Grassmann distance for the first data is very small at p =2 compared to that for the second data; in other words, Treefit made this prediction more confidently for the first dataset than for the second one. This result is reasonable because the first dataset is much less noisy than the second one. Thus, Treefit has correctly determined the number of principal paths in the underlying tree together with the goodness-of-fit for each dataset.